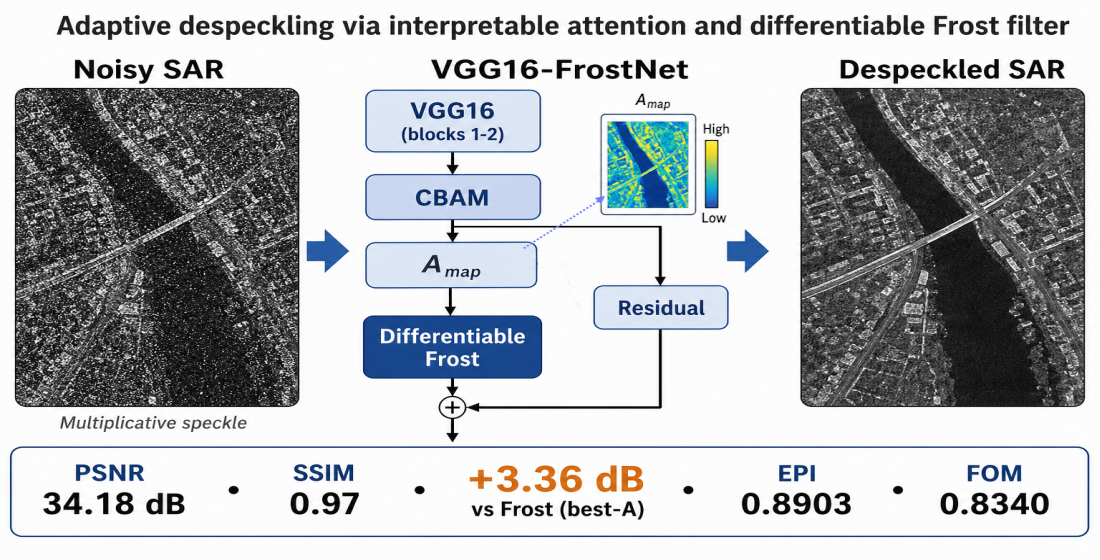

Development of a hybrid method VGG16-FrostNet for adaptive despeckling of synthetic aperture radar (SAR) images using attention mechanism and differentiable Frost filter

DOI: https://doi.org/10.15587/2706-5448.2026.358316

Keywords: SAR images, speckle noise, suppression, Frost filter, VGG16, CBAM, deep learning

Abstract

The object of research is the process of suppressing multiplicative speckle noise in synthetic aperture radar (SAR) images, which significantly complicates their analysis. The problem addressed is the lack of end-to-end hybrid methods that achieve spatial adaptation by integrating a mathematical model of local statistics (the Frost filter) directly into the computation graph of a neural network. The aim of this research is to automate adaptive SAR image filtering by developing the hybrid VGG16-FrostNet method. To this end, a differentiable mathematical model of the classical Frost filter was formulated for integration into a neural network; an architecture was developed on the basis of a pretrained VGG16 (Visual Geometry Group) backbone (blocks 1–2); and a Convolutional Block Attention Module (CBAM) was integrated to predict a spatially varying map of damping coefficients, A_map, with a value between 0.5 and 10.0 for each pixel. The hybrid architecture also includes a residual branch for detail recovery and was optimized end-to-end with a composite loss function that combines L1, an edge loss based on the Sobel operator, SSIM, and attention regularization. The model was trained on synthetic data with gamma-distributed speckle (equivalent number of looks between 3.0 and 6.0), representative of typical SAR conditions. On the test set, the method achieved a mean PSNR of 34.18 dB and an SSIM of 0.97. This corresponds to a PSNR gain of 9.45 dB over the noisy image and of 3.36 dB over the classical Frost filter with an optimal static coefficient. The edge preservation indicators EPI = 0.8903 and FOM = 0.8340 indicate reliable preservation of structural boundaries. The results show that the developed hybrid method provides spatially adaptive damping with interpretable attention maps, which supports its deployment in automated SAR data processing pipelines.
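The training data are described only as synthetic images with gamma-distributed speckle at 3.0 to 6.0 looks. A minimal sketch of the standard multiplicative model for L-look intensity speckle follows. It assumes (this is not stated in the abstract) that the clean image is an intensity image and that L is drawn uniformly for each image. The speckle n ~ Gamma(shape = L, scale = 1/L) has unit mean and variance 1/L. The helper name `add_gamma_speckle` is hypothetical.

```python
import numpy as np

def add_gamma_speckle(clean, looks_range=(3.0, 6.0), rng=None):
    """Multiply a clean intensity image by unit-mean gamma speckle.

    Hypothetical helper. Assumption: the number of looks L is drawn
    uniformly from looks_range for each call. The speckle
    n ~ Gamma(shape=L, scale=1/L) has E[n] = 1 and Var[n] = 1/L.
    """
    rng = np.random.default_rng() if rng is None else rng
    looks = rng.uniform(*looks_range)
    speckle = rng.gamma(shape=looks, scale=1.0 / looks, size=clean.shape)
    return clean * speckle, looks
```

For example, `noisy, L = add_gamma_speckle(clean, rng=np.random.default_rng(0))` returns a speckled copy of `clean` together with the sampled number of looks. Because the noise is multiplicative, the local standard deviation grows with the local mean. This is why Frost-type filters work with the coefficient of variation rather than with an additive noise variance.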
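To illustrate what a Frost filter with a per-pixel damping coefficient computes, here is a minimal NumPy sketch. It uses a commonly implemented discrete form of the Frost filter. The weights are exp(-D · C² · r), where C² = σ²/μ² is the squared local coefficient of variation, r is the distance from the window centre, and D is the damping coefficient. The exact formulation used in VGG16-FrostNet is not given in the abstract and may differ. Every step (local moments, exponentials, a normalised weighted sum) is smooth. This smoothness is what lets the same computation sit inside an autodiff graph when it is written in a framework such as PyTorch. Mapping network outputs into the quoted 0.5–10.0 range with a sigmoid is an assumed design choice. The names `bounded_damping` and `frost_filter` are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def bounded_damping(logits, d_min=0.5, d_max=10.0):
    """Assumed sigmoid mapping of unbounded outputs into (d_min, d_max)."""
    return d_min + (d_max - d_min) / (1.0 + np.exp(-logits))

def frost_filter(img, damping, win=5, eps=1e-8):
    """Frost-type filter with a scalar or per-pixel damping coefficient.

    img: 2-D float intensity image; damping: scalar or array shaped like img;
    win: odd window size. Uses the common form w = exp(-D * C^2 * r).
    """
    r = win // 2
    padded = np.pad(img, r, mode="reflect")
    windows = sliding_window_view(padded, (win, win))    # (H, W, win, win)
    mean = windows.mean(axis=(-2, -1))
    var = windows.var(axis=(-2, -1))
    cv2 = var / (mean ** 2 + eps)                          # squared coefficient of variation
    yy, xx = np.mgrid[-r:r + 1, -r:r + 1]
    dist = np.hypot(xx, yy)                                # distance to the window centre
    alpha = np.broadcast_to(damping, img.shape) * cv2      # per-pixel decay rate
    weights = np.exp(-alpha[..., None, None] * dist)       # (H, W, win, win)
    return (weights * windows).sum(axis=(-2, -1)) / weights.sum(axis=(-2, -1))
```

A scalar `damping` reproduces the classical static-coefficient filter. Passing `bounded_damping(logits)` with `logits` of shape `img.shape` (for example, the output of an attention head) gives the spatially varying case. Where C² is small (homogeneous areas), the weights stay nearly uniform and the filter averages. Where C² and D are large (edges, point targets), the weight concentrates on the centre pixel and detail is kept.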
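The reported PSNR values, the PSNR gains and the EPI can be reproduced in form, though not in value, with the common definitions sketched below. PSNR = 10·log10(peak²/MSE). A gain is the difference between two PSNR values in dB. EPI is taken here in one widely used correlation form, computed on Laplacian-filtered images. The peak value of 1.0 (images scaled to [0, 1]), the 4-neighbour 3×3 Laplacian and the function names are assumptions. The paper's exact metric settings are not stated in the abstract.

```python
import numpy as np

def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in dB (assumes images scaled so that max = peak)."""
    mse = np.mean((np.asarray(reference, float) - np.asarray(estimate, float)) ** 2)
    return 10.0 * np.log10(peak ** 2 / mse)

def psnr_gain(reference, noisy, denoised, peak=1.0):
    """PSNR improvement (dB) of the denoised image over the noisy input."""
    return psnr(reference, denoised, peak) - psnr(reference, noisy, peak)

def laplacian(img):
    """4-neighbour 3x3 Laplacian evaluated on interior pixels."""
    return (img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
            - 4.0 * img[1:-1, 1:-1])

def epi(reference, estimate):
    """Correlation-form edge preservation index of the high-pass responses."""
    a = laplacian(np.asarray(reference, float))
    b = laplacian(np.asarray(estimate, float))
    a = a - a.mean()
    b = b - b.mean()
    return float(np.sum(a * b) / np.sqrt(np.sum(a * a) * np.sum(b * b)))
```

An EPI of 1 means that the high-pass structure of the estimate is perfectly correlated with that of the reference. Residual speckle or over-smoothing lowers it. A gain such as the 9.45 dB in the abstract corresponds to `psnr_gain(clean, noisy, denoised)` under whatever peak convention the authors used.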
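The composite loss combines L1, a Sobel-based edge loss, SSIM and attention regularization. The abstract gives neither the weights nor the exact forms of the edge and regularization terms. The sketch below therefore covers only the L1 and Sobel edge terms. It assumes an edge loss defined as the L1 distance between Sobel gradient magnitudes, with a hypothetical weight `w_edge`. In training, these terms would be computed in an autodiff framework so that gradients reach both the CNN and the Frost layer. NumPy is used here only to show the arithmetic.

```python
import numpy as np

SOBEL_X = np.array([[-1.0, 0.0, 1.0],
                    [-2.0, 0.0, 2.0],
                    [-1.0, 0.0, 1.0]])
SOBEL_Y = SOBEL_X.T

def filter3x3(img, kernel):
    """'Valid' 3x3 correlation written with array slicing (no SciPy needed)."""
    h, w = img.shape
    out = np.zeros((h - 2, w - 2))
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * img[dy:dy + h - 2, dx:dx + w - 2]
    return out

def sobel_magnitude(img, eps=1e-12):
    """Gradient magnitude; eps keeps the square root differentiable in flat areas."""
    gx = filter3x3(img, SOBEL_X)
    gy = filter3x3(img, SOBEL_Y)
    return np.sqrt(gx ** 2 + gy ** 2 + eps)

def l1_edge_loss(pred, target, w_edge=0.1):
    """L1 term plus a weighted L1 distance between Sobel magnitudes.

    w_edge is a hypothetical weight; the SSIM and attention terms are omitted.
    """
    l1 = np.mean(np.abs(pred - target))
    edge = np.mean(np.abs(sobel_magnitude(pred) - sobel_magnitude(target)))
    return l1 + w_edge * edge
```

Because the Sobel kernels sum to zero, a constant intensity offset affects only the L1 term. The edge term penalises blurred or displaced boundaries, which pixel-wise L1 alone tends to tolerate.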

References

- Oliver, C., Quegan, S. (2004). Understanding synthetic aperture radar images. SciTech Publishing, 479. Available at: https://books.google.com/books?id=0Ev3IoGbVSIC

- Argenti, F., Lapini, A., Bianchi, T., Alparone, L. (2013). A Tutorial on Speckle Reduction in Synthetic Aperture Radar Images. IEEE Geoscience and Remote Sensing Magazine, 1 (3), 6–35. https://doi.org/10.1109/mgrs.2013.2277512

- Touzi, R. (2002). A review of speckle filtering in the context of estimation theory. IEEE Transactions on Geoscience and Remote Sensing, 40 (11), 2392–2404. https://doi.org/10.1109/tgrs.2002.803727

- Fracastoro, G., Magli, E., Poggi, G., Scarpa, G., Valsesia, D., Verdoliva, L. (2021). Deep Learning Methods For Synthetic Aperture Radar Image Despeckling: An Overview Of Trends And Perspectives. IEEE Geoscience and Remote Sensing Magazine, 9 (2), 29–51. https://doi.org/10.1109/mgrs.2021.3070956

- Frost, V. S., Stiles, J. A., Shanmugan, K. S., Holtzman, J. C. (1982). A Model for Radar Images and Its Application to Adaptive Digital Filtering of Multiplicative Noise. IEEE Transactions on Pattern Analysis and Machine Intelligence, 4 (2), 157–166. https://doi.org/10.1109/tpami.1982.4767223

- Lee, J.-S. (1980). Digital Image Enhancement and Noise Filtering by Use of Local Statistics. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2 (2), 165–168. https://doi.org/10.1109/tpami.1980.4766994

- Kuan, D. T., Sawchuk, A. A., Strand, T. C., Chavel, P. (1985). Adaptive Noise Smoothing Filter for Images with Signal-Dependent Noise. IEEE Transactions on Pattern Analysis and Machine Intelligence, 7 (2), 165–177. https://doi.org/10.1109/tpami.1985.4767641

- Lopes, A., Touzi, R., Nezry, E. (1990). Adaptive speckle filters and scene heterogeneity. IEEE Transactions on Geoscience and Remote Sensing, 28 (6), 992–1000. https://doi.org/10.1109/36.62623

- Khudov, H., Makoveichuk, O., Tokarev, S., Andriushchenko, A., Pukhovyi, O., Rohulia, O. et al. (2026). Improving a method for filtering images acquired from a space-based radar observation system based on the Kuan algorithm. Eastern-European Journal of Enterprise Technologies, 1 (9 (139)), 40–46. https://doi.org/10.15587/1729-4061.2026.352347

- Filipponi, F. (2019). Sentinel-1 GRD Preprocessing Workflow. 3rd International Electronic Conference on Remote Sensing, 11. https://doi.org/10.3390/ecrs-3-06201

- Abramov, S., Krivenko, S., Roenko, A., Lukin, V., Djurovic, I., Chobanu, M. (2013). Prediction of filtering efficiency for DCT-based image denoising. 2013 2nd Mediterranean Conference on Embedded Computing (MECO). Budva: IEEE, 97–100. https://doi.org/10.1109/meco.2013.6601327

- Lukin, V. V., Abramov, S. K., Rubel, A., Krivenko, S. S., Naumenko, A., Vozel, B. et al. (2014). An approach to prediction of signal-dependent noise removal efficiency by dct-based filter. Telecommunications and Radio Engineering, 73 (18), 1645–1659. https://doi.org/10.1615/telecomradeng.v73.i18.40

- Rubel, O. S., Lukin, V. V., De Medeiros, F. S. (2015). Prediction of Despeckling Efficiency of DCT-Based Filters Applied to SAR Images. 2015 International Conference on Distributed Computing in Sensor Systems. Fortaleza: IEEE, 159–168. https://doi.org/10.1109/dcoss.2015.16

- Rubel, O., Lukin, V., Rubel, A., Egiazarian, K. (2019). NN-Based Prediction of Sentinel-1 SAR Image Filtering Efficiency. Geosciences, 9 (7), 290. https://doi.org/10.3390/geosciences9070290

- Rubel, O., Lukin, V., Rubel, A., Egiazarian, K. (2021). Selection of Lee Filter Window Size Based on Despeckling Efficiency Prediction for Sentinel SAR Images. Remote Sensing, 13 (10), 1887. https://doi.org/10.3390/rs13101887

- Rubel, O. S., Rubel, A. S., Lukin, V., Egiazarian, K. (2022). Optimal parameters selection of the Frost filter based on despeckling efficiency prediction for Sentinel SAR images. Electronic Imaging, 34 (10), 193-1–193-6. https://doi.org/10.2352/ei.2022.34.10.ipas-193

- Chierchia, G., Cozzolino, D., Poggi, G., Verdoliva, L. (2017). SAR image despeckling through convolutional neural networks. 2017 IEEE International Geoscience and Remote Sensing Symposium (IGARSS). Fort Worth: IEEE, 5438–5441. https://doi.org/10.1109/igarss.2017.8128234

- Zhang, K., Zuo, W., Chen, Y., Meng, D., Zhang, L. (2017). Beyond a Gaussian Denoiser: Residual Learning of Deep CNN for Image Denoising. IEEE Transactions on Image Processing, 26 (7), 3142–3155. https://doi.org/10.1109/tip.2017.2662206

- Dalsasso, E., Denis, L., Tupin, F. (2021). SAR2SAR: A Semi-Supervised Despeckling Algorithm for SAR Images. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 14, 4321–4329. https://doi.org/10.1109/jstars.2021.3071864

- Dalsasso, E., Yang, X., Denis, L., Tupin, F., Yang, W. (2020). SAR Image Despeckling by Deep Neural Networks: from a Pre-Trained Model to an End-to-End Training Strategy. Remote Sensing, 12 (16), 2636. https://doi.org/10.3390/rs12162636

- Russakovsky, O., Deng, J., Su, H., Krause, J., Satheesh, S., Ma, S. et al. (2015). ImageNet Large Scale Visual Recognition Challenge. International Journal of Computer Vision, 115 (3), 211–252. https://doi.org/10.1007/s11263-015-0816-y

- Simonyan, K., Zisserman, A. (2015). Very deep convolutional networks for large-scale image recognition. Proceedings of the International Conference on Learning Representations (ICLR). arXiv. Available at: https://arxiv.org/abs/1409.1556

- Vitale, S., Ferraioli, G., Pascazio, V. (2021). Multi-Objective CNN-Based Algorithm for SAR Despeckling. IEEE Transactions on Geoscience and Remote Sensing, 59 (11), 9336–9349. https://doi.org/10.1109/tgrs.2020.3034852

- Moreira, A., Prats-Iraola, P., Younis, M., Krieger, G., Hajnsek, I., Papathanassiou, K. P. (2013). A tutorial on synthetic aperture radar. IEEE Geoscience and Remote Sensing Magazine, 1 (1), 6–43. https://doi.org/10.1109/mgrs.2013.2248301

- Yosinski, J., Clune, J., Bengio, Y., Lipson, H. (2014). How transferable are features in deep neural networks? Advances in Neural Information Processing Systems (NeurIPS), 27. Available at: https://papers.nips.cc/paper/5347-how-transferable-are-features-in-deep-neural-networks

- Pan, S. J., Yang, Q. (2010). A Survey on Transfer Learning. IEEE Transactions on Knowledge and Data Engineering, 22 (10), 1345–1359. https://doi.org/10.1109/tkde.2009.191

- Woo, S., Park, J., Lee, J.-Y., Kweon, I. S. (2018). CBAM: Convolutional Block Attention Module. Computer Vision – ECCV 2018, 3–19. https://doi.org/10.1007/978-3-030-01234-2_1

- Hu, J., Shen, L., Sun, G. (2018). Squeeze-and-Excitation Networks. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Salt Lake City: IEEE, 7132–7141. https://doi.org/10.1109/cvpr.2018.00745

- Wang, X., Girshick, R., Gupta, A., He, K. (2018). Non-local Neural Networks. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Salt Lake City: IEEE, 7794–7803. https://doi.org/10.1109/cvpr.2018.00813

- Al-Senaikh, R., Rubel, O. (2025). Predicting Filtered Image Quality Using Transfer Learning on Sentinel-1 Speckle Noise with DenseNet-121. Ukrainian Journal of Remote Sensing, 12 (4), 4–15. https://doi.org/10.36023/ujrs.2025.12.4.293

- Wang, Z., Bovik, A. C., Sheikh, H. R., Simoncelli, E. P. (2004). Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing, 13 (4), 600–612. https://doi.org/10.1109/tip.2003.819861

- Wang, Z., Simoncelli, E. P., Bovik, A. C. (2003). Multiscale structural similarity for image quality assessment. The Thirty-Seventh Asilomar Conference on Signals, Systems & Computers, 2003. Pacific Grove: IEEE, 1398–1402. https://doi.org/10.1109/acssc.2003.1292216

- Zhang, L., Zhang, L., Mou, X., Zhang, D. (2011). FSIM: A Feature Similarity Index for Image Quality Assessment. IEEE Transactions on Image Processing, 20 (8), 2378–2386. https://doi.org/10.1109/tip.2011.2109730

- Reisenhofer, R., Bosse, S., Kutyniok, G., Wiegand, T. (2018). A Haar wavelet-based perceptual similarity index for image quality assessment. Signal Processing: Image Communication, 61, 33–43. https://doi.org/10.1016/j.image.2017.11.001

- Nafchi, H. Z., Shahkolaei, A., Hedjam, R., Cheriet, M. (2016). Mean Deviation Similarity Index: Efficient and Reliable Full-Reference Image Quality Evaluator. IEEE Access, 4, 5579–5590. https://doi.org/10.1109/access.2016.2604042

- Sattar, F., Floreby, L., Salomonsson, G., Lovstrom, B. (1997). Image enhancement based on a nonlinear multiscale method. IEEE Transactions on Image Processing, 6 (6), 888–895. https://doi.org/10.1109/83.585239

- Abdou, I. E., Pratt, W. K. (1979). Quantitative design and evaluation of enhancement/thresholding edge detectors. Proceedings of the IEEE, 67 (5), 753–763. https://doi.org/10.1109/proc.1979.11325

- Mathieu, M., Couprie, C., LeCun, Y. (2016). Deep multi-scale video prediction beyond mean square error. Proceedings of the International Conference on Learning Representations (ICLR). arXiv. https://doi.org/10.48550/arXiv.1511.05440

License

Copyright (c) 2026 Raed Al-Senaikh, Oleksii Rubel

This work is licensed under a Creative Commons Attribution 4.0 International License.

The formalization and terms of copyright transfer (identification of authorship) are set out in the License Agreement. In particular, the authors retain authorship of their manuscript and grant the journal the right of first publication of this work under the terms of the Creative Commons CC BY license. The authors also retain the right to enter independently into additional agreements for the non-exclusive distribution of the work in the form in which it was published by this journal, provided that a link to the first publication of the article in this journal is preserved.