Revealing intrinsic dimensionality patterns in semantic spaces of natural languages using graph algorithms

DOI: https://doi.org/10.15587/1729-4061.2026.351509

Keywords: intrinsic dimensionality, semantic spaces, graph algorithms, fractal structure, vector representations

Abstract

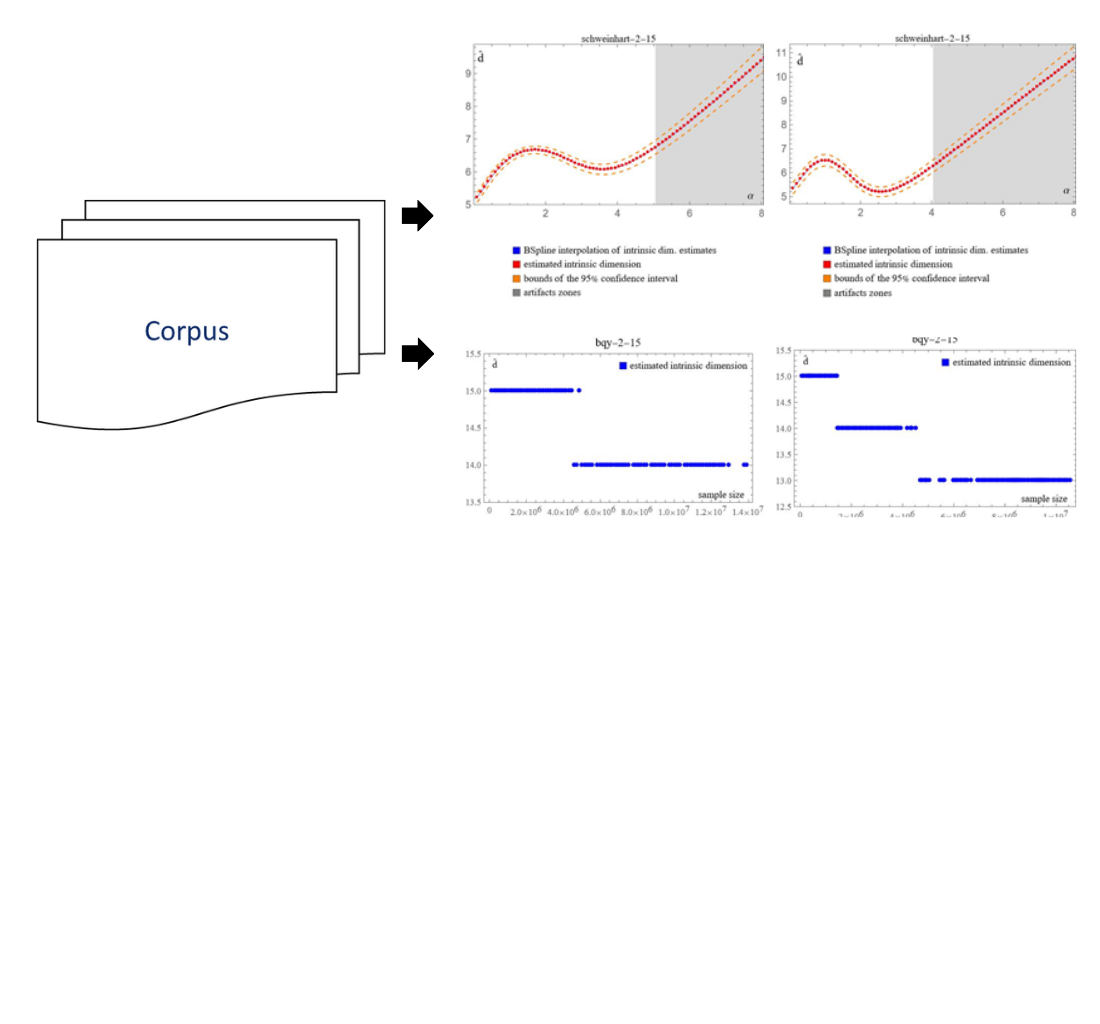

This study considers semantic spaces of n-grams (unigrams, bigrams, and trigrams) built from natural language texts. It addresses a limitation of conventional approaches, which use semantic spaces of a fixed, high dimensionality without taking their internal geometric structure into account. An experimental study was conducted of the intrinsic dimensionality of the vector representations of linguistic objects used in natural language processing tasks.

To address this problem, graph algorithms for estimating intrinsic dimension were applied. These algorithms are based on statistics of the minimum spanning tree and yield estimates of both the Hausdorff and the topological dimension. The experiments were conducted on corpora of national literature in six typologically diverse languages: Russian, English, Kazakh, Kyrgyz, Tatar, and Uzbek. Vector representations of n-grams were obtained by singular value decomposition of the context matrix, which made it possible to vary the dimensionality of the embedding space without retraining the models.
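The abstract does not give the estimator's formulas, so the following is only a hedged illustration of how a minimum-spanning-tree statistic can yield a dimension estimate. It assumes one well-known MST statistic: for n points sampled from a set of dimension d, the total Euclidean MST length grows roughly as L(n) ~ C * n^((d-1)/d), so the slope s of log L against log n gives d = 1/(1 - s). This is not necessarily the authors' algorithm; the function names `mst_length` and `mst_dimension`, the subsample sizes, and the synthetic test data are hypothetical.

```python
import numpy as np
from scipy.sparse.csgraph import minimum_spanning_tree
from scipy.spatial.distance import pdist, squareform


def mst_length(points: np.ndarray) -> float:
    """Total Euclidean length of the minimum spanning tree over `points`."""
    dist = squareform(pdist(points))      # dense n x n distance matrix
    # SciPy treats zero entries as absent edges; duplicate points would add
    # zero length anyway, so the total is unaffected.
    tree = minimum_spanning_tree(dist)    # sparse matrix holding the tree edges
    return float(tree.sum())


def mst_dimension(points: np.ndarray, sample_sizes, rng=None) -> float:
    """Hypothetical MST length-scaling estimator of intrinsic dimension.

    Assumes L(n) ~ C * n**((d - 1) / d) for n points drawn from a
    d-dimensional set, so the slope s of log L vs log n gives d = 1 / (1 - s).
    """
    rng = np.random.default_rng(rng)
    lengths = []
    for n in sample_sizes:
        idx = rng.choice(len(points), size=n, replace=False)  # random subsample
        lengths.append(mst_length(points[idx]))
    slope, _ = np.polyfit(np.log(sample_sizes), np.log(lengths), 1)
    return 1.0 / (1.0 - slope)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic check: a flat 2-D square embedded in 10-D space.
    plane = np.zeros((1600, 10))
    plane[:, :2] = rng.random((1600, 2))
    print(mst_dimension(plane, [200, 400, 800, 1600], rng=1))
```

On this synthetic plane the estimate should land near 2, typically slightly below it because points near the boundary have longer MST edges. Note that `pdist` materialises all n(n-1)/2 distances, so memory grows quadratically with the subsample size; for large vocabularies a sparse nearest-neighbour graph is a common cheaper approximation.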

The results revealed consistent differences between the intrinsic dimensionalities of the semantic spaces of the languages studied and confirmed that these spaces are multifractal. The findings suggest that these differences stem from the typological and structural features of the languages. The estimates are robust to noise and to changes in the dimensionality of the embedding space, which supports the reproducibility of the results.
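One plausible reason why an SVD-based pipeline lets the embedding dimensionality vary without retraining, and why distance-based estimates can stay stable across dimensionalities, can be shown with a small sketch. Assumptions: the context matrix is a dense NumPy array with one row per n-gram, the k-dimensional embedding is taken as the first k columns of U*S from a single thin SVD, and a synthetic low-rank-plus-noise matrix stands in for a real corpus; `svd_row_embeddings` and `distortion_by_k` are hypothetical names, not the authors' code. Because the rows of U*S preserve every pairwise distance of the context matrix, truncating to k columns can only shrink distances, and once k exceeds the effective rank the geometry should change very little.

```python
import numpy as np
from scipy.spatial.distance import pdist


def svd_row_embeddings(context: np.ndarray) -> np.ndarray:
    """Hypothetical helper: rows of U * S from one thin SVD of the context matrix.

    Slicing the first k columns yields a k-dimensional embedding, so every k
    reuses the same decomposition (no retraining).
    """
    u, s, _ = np.linalg.svd(context, full_matrices=False)
    return u * s  # scale column j of U by singular value s[j]


def distortion_by_k(context: np.ndarray, ks) -> dict:
    """Relative change of all pairwise row distances when keeping k components."""
    full = pdist(context)                # distances between original context rows
    emb = svd_row_embeddings(context)    # computed once, sliced for each k
    return {
        k: float(np.linalg.norm(pdist(emb[:, :k]) - full) / np.linalg.norm(full))
        for k in ks
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-in: 300 "n-grams" x 200 "contexts", rank 3 plus small noise.
    low_rank = rng.standard_normal((300, 3)) @ rng.standard_normal((3, 200))
    context = low_rank + 0.01 * rng.standard_normal((300, 200))
    for k, err in distortion_by_k(context, [1, 3, 10, 50, 200]).items():
        print(f"k={k:3d}  relative distance distortion={err:.2e}")
```

The distortion is non-increasing in k and drops to rounding error once all components are kept; in the synthetic example most of the geometry is already recovered at k = 3, the rank of the signal.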

The practical significance of this work lies in the possibility of using intrinsic dimensionality as an engineering parameter when designing and optimizing natural language processing systems, in order to reduce computational and resource costs.

License

Copyright (c) 2026 Assel S. Yerbolova, Ildar G. Kurmashev

This work is licensed under a Creative Commons Attribution 4.0 International License.

The terms of copyright transfer and the identification of authorship are set out in the License Agreement. In particular, the authors retain authorship of their manuscript and grant the journal the right of first publication of this work under the terms of the Creative Commons CC BY license. The authors may also enter into separate additional agreements for the non-exclusive distribution of the work in the form in which it was published by this journal, provided that a link to the first publication of the article in this journal is preserved.

A license agreement is a document in which the author warrants that he or she owns all copyright in the work (manuscript, article, etc.).

By signing the License Agreement with TECHNOLOGY CENTER PC, the authors retain all rights to further use of their work, provided that they link to our edition in which the work was published.

Under the terms of the License Agreement, the Publisher TECHNOLOGY CENTER PC does not take away your copyright; it receives permission from the authors to use and disseminate the publication through the world's scientific resources (its own electronic resources, scientometric databases, repositories, libraries, etc.).

Without a signed License Agreement, or if the agreement lacks identifiers that establish the author's identity, the editors have no right to work with the manuscript.

Note that there is another type of agreement between authors and publishers, in which copyright is transferred from the authors to the publisher. In that case, the authors lose ownership of their work and may not use it in any way.