Development of multi-agent generative pipelines framework for learning plan generation with deterministic constraint verification

DOI:

https://doi.org/10.15587/1729-4061.2026.356830

Keywords:

multi-agent, generative AI, structured generation, constraint validation, ablation analysis

Abstract

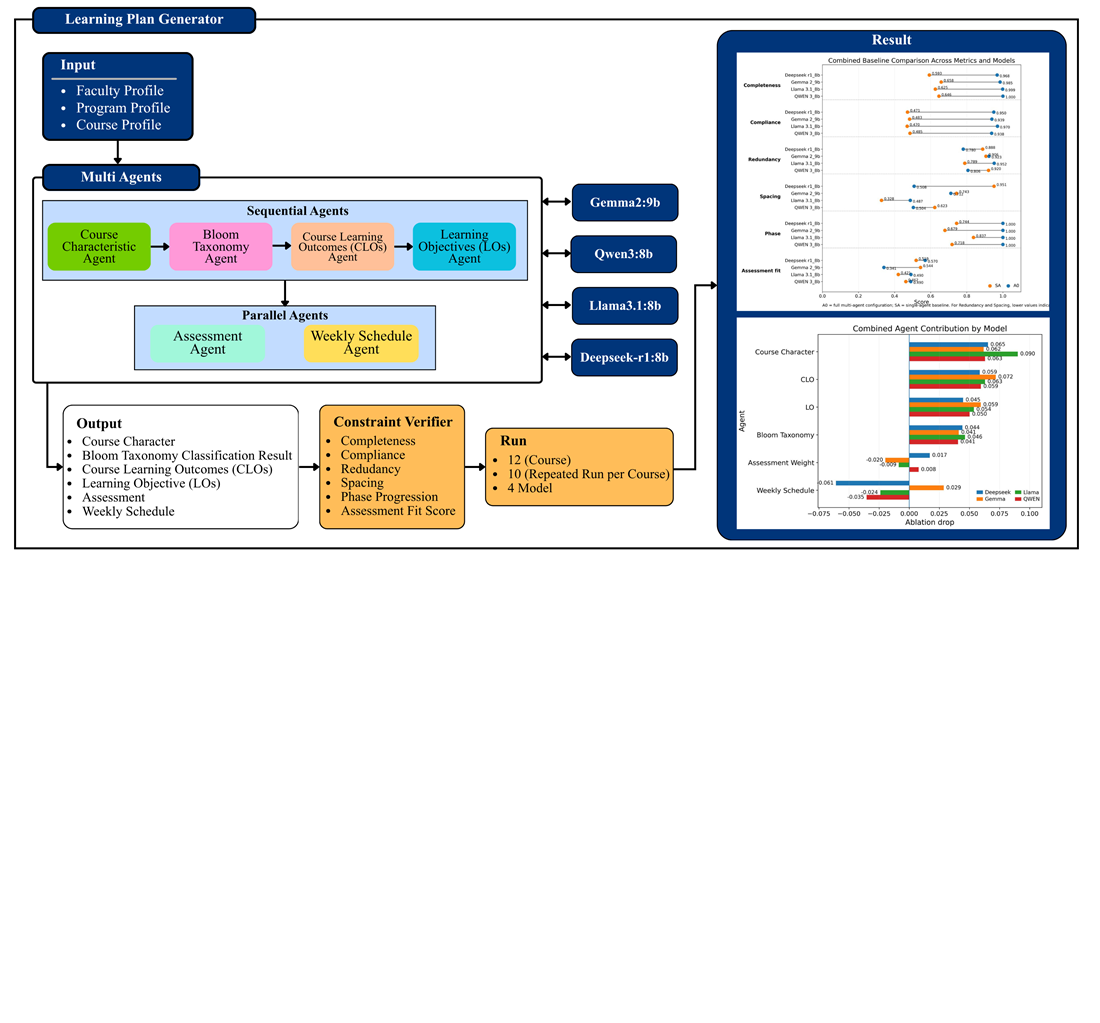

Large language models (LLMs) are increasingly used to generate structured learning plans aligned with outcome-based education (OBE). The object of the study is a multi-agent workflow for generating a structured OBE learning-plan package with a final deterministic verification stage. The problem addressed is the low reliability of LLM-generated outputs, which frequently violate schema rules, numeric constraints, and cross-artifact consistency requirements. To solve this problem, a multi-agent generative pipeline is proposed that decomposes the task across six specialized agents and then applies deterministic constraint verification to the final artifact bundle. Structural reliability is measured using completeness and compliance, while cross-artifact coherence is evaluated through redundancy, spacing, phase progression, and assessment fit. The evaluation covers 12 courses with 10 repeated runs per course (120 runs per variant) across four LLMs to assess cross-model robustness. The results show that the multi-agent pipeline achieves completeness of 0.9682–1.00 and compliance of 0.9376–0.9698, significantly outperforming the single-agent configuration (completeness 0.5926–0.6580; compliance 0.4698–0.4853). These improvements are explained by task decomposition, which reduces structural failure propagation, and by deterministic verification, which rejects invalid outputs and preserves referential integrity. Ablation analysis indicates that the Course Character agent has the highest impact on overall performance. The proposed framework can be applied in higher education curriculum planning under OBE conditions, using minimal course metadata and producing machine-verifiable structured artifacts.
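To make the idea of a deterministic verification stage more concrete, the following is a minimal Python sketch and not the authors' implementation. The abstract does not define the bundle schema or the metric formulas, so everything here is an assumption for illustration: the section names (`outcomes`, `weekly_plan`, `assessments`), the two example checks, and the reading of completeness as the share of required sections present and of compliance as the share of constraint checks passed.

```python
from typing import Any

# Hypothetical required sections of a learning-plan bundle (assumed, not from the paper).
REQUIRED_KEYS = ("outcomes", "weekly_plan", "assessments")


def completeness(bundle: dict[str, Any]) -> float:
    """Assumed metric: share of required sections that are present and non-empty."""
    present = sum(1 for key in REQUIRED_KEYS if bundle.get(key))
    return present / len(REQUIRED_KEYS)


def weights_sum_to_100(bundle: dict[str, Any]) -> bool:
    """Example numeric constraint: assessment weights must total 100."""
    return sum(a.get("weight", 0) for a in bundle.get("assessments", [])) == 100


def references_resolve(bundle: dict[str, Any]) -> bool:
    """Example cross-artifact constraint: every referenced outcome ID is declared."""
    declared = {o["id"] for o in bundle.get("outcomes", [])}
    used = {oid for w in bundle.get("weekly_plan", []) for oid in w.get("outcome_ids", [])}
    used |= {oid for a in bundle.get("assessments", []) for oid in a.get("outcome_ids", [])}
    return used <= declared


# Hypothetical check list; a real verifier would carry many more rules.
CHECKS = (weights_sum_to_100, references_resolve)


def compliance(bundle: dict[str, Any]) -> float:
    """Assumed metric: share of deterministic checks that the bundle passes."""
    return sum(1 for check in CHECKS if check(bundle)) / len(CHECKS)


def verify(bundle: dict[str, Any]) -> bool:
    """Deterministic gate: accept only bundles that are complete and fully compliant."""
    return completeness(bundle) == 1.0 and compliance(bundle) == 1.0


valid = {
    "outcomes": [{"id": "CLO1"}, {"id": "CLO2"}],
    "weekly_plan": [
        {"week": 1, "outcome_ids": ["CLO1"]},
        {"week": 2, "outcome_ids": ["CLO2"]},
    ],
    "assessments": [
        {"name": "Quiz", "weight": 40, "outcome_ids": ["CLO1"]},
        {"name": "Project", "weight": 60, "outcome_ids": ["CLO2"]},
    ],
}

# Same bundle, but the weights total 90 and one assessment cites an undeclared outcome.
invalid = {
    **valid,
    "assessments": [
        {"name": "Quiz", "weight": 40, "outcome_ids": ["CLO1"]},
        {"name": "Project", "weight": 50, "outcome_ids": ["CLO3"]},
    ],
}

print(verify(valid), verify(invalid))
```

Because the checks are plain functions with no model in the loop, the same bundle always yields the same verdict, which is the property a final verification stage relies on when it rejects invalid outputs.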

License

Copyright (c) 2026 Mohammad Fadly Syahputra, Opim Salim Sitompul, Fahmi Fahmi, Maya Silvi Lydia, Pauzi Ibrahim Nainggolan, Rendra Mahardika, Riza Sulaiman

This work is licensed under a Creative Commons Attribution 4.0 International License.

The consolidation and conditions for the transfer of copyright (identification of authorship) are set out in the License Agreement. In particular, the authors retain authorship of their manuscript and grant the journal the right of first publication of this work under the terms of the Creative Commons CC BY license. They may also enter into separate additional agreements for the non-exclusive distribution of the work in the form in which it was published by this journal, provided that a link to the first publication of the article in this journal is preserved.

A license agreement is a document in which the author warrants that he/she owns all copyright for the work (manuscript, article, etc.).

The authors, signing the License Agreement with TECHNOLOGY CENTER PC, have all rights to the further use of their work, provided that they link to our edition in which the work was published.

According to the terms of the License Agreement, the Publisher TECHNOLOGY CENTER PC does not take over the authors' copyright; it receives permission from the authors to use and disseminate the publication through the world's scientific resources (its own electronic resources, scientometric databases, repositories, libraries, etc.).

If there is no signed License Agreement, or if the agreement lacks identifiers that establish the identity of the author, the editors have no right to work with the manuscript.

It is important to remember that there is another type of agreement between authors and publishers – when copyright is transferred from the authors to the publisher. In this case, the authors lose ownership of their work and may not use it in any way.