Improving the efficiency and accuracy of classification of recipients of the free nutrition food program through the application of knowledge distillation in a convolutional neural network algorithm

DOI:

https://doi.org/10.15587/1729-4061.2026.357493

Keywords:

Food Program, CNN, student distillation model, classification system

Abstract

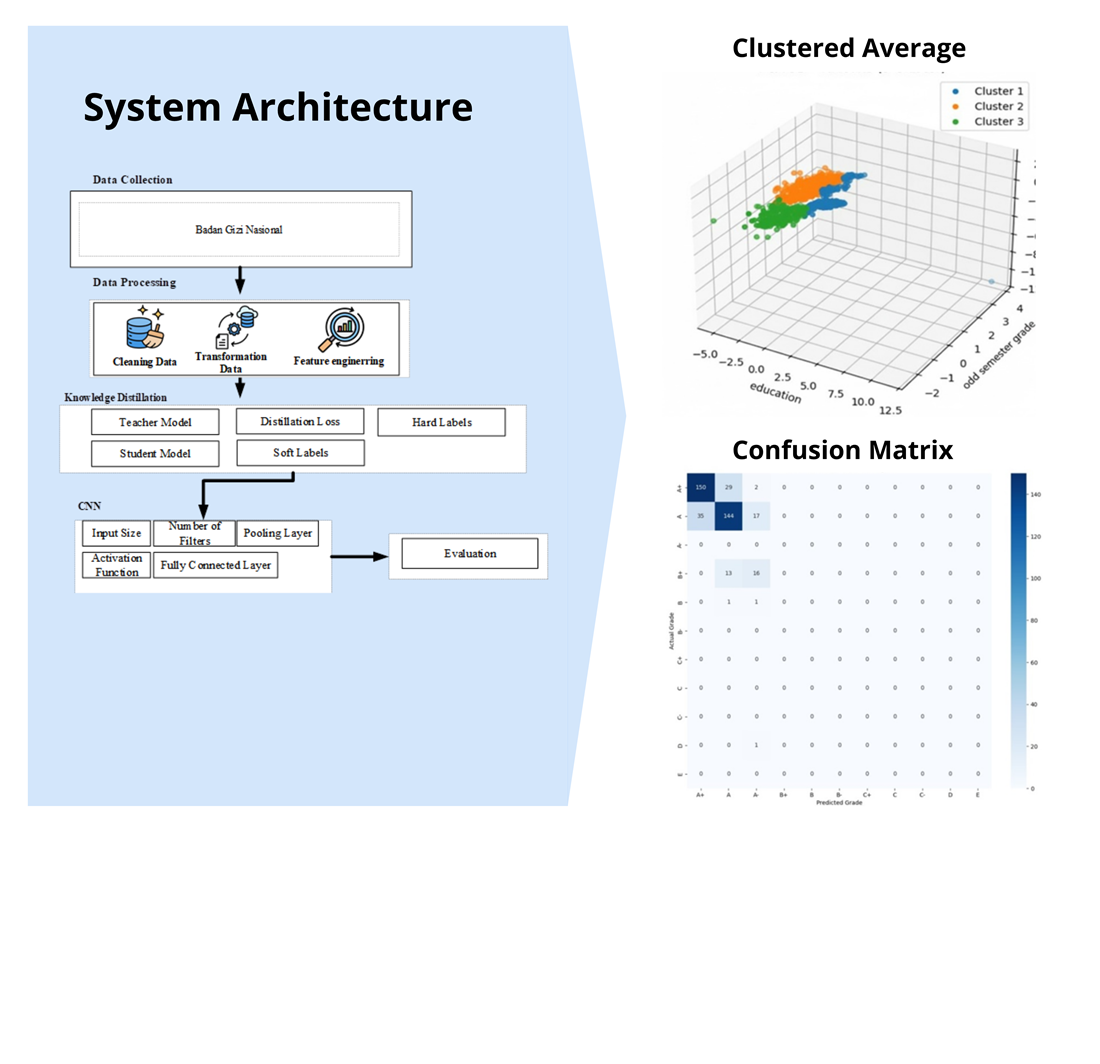

The object of this study is a deep learning-based classification system applied to recipients of the Free Nutritional Food Program, using recipient data as a representation of the eligibility determination process in providing social assistance. The problem to be solved is the high computational complexity of large-capacity convolutional neural network (CNN) models, which, despite their high accuracy, require significant computational resources and are therefore poorly suited to large-scale implementation. To overcome this, the study applies the knowledge distillation method, using a large-capacity CNN as the teacher model and a CNN with a lightweight architecture as the student model, with knowledge transferred through soft labels. The results show that the student model produced by distillation reaches about 90–93% accuracy. This is very close to the accuracy of the teacher model (92–95%) and clearly better than that of a CNN trained without distillation (85–88%). The improvement arises because distillation transfers information as probability distributions, which are richer than the hard labels used in traditional training. Compared with the non-distilled CNN, the proposed model offers higher accuracy, and compared with the teacher it offers a more compact size and faster inference. These features make the classification process less computationally intensive and lead to more efficient memory use and lower energy consumption. The results could be applied in many deep learning classification systems, particularly on resource-constrained devices. One such application is the implementation of the Free Nutritional Food Program under real-life conditions, which requires better accuracy with no loss of efficiency.
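The soft label-based transfer described in the abstract can be illustrated with a minimal sketch of a distillation loss for a single sample. This is not the authors' implementation: it assumes the common formulation from Hinton et al. (2015), and the temperature, the weighting factor `alpha`, the function names, and the two-class logits are all hypothetical values chosen for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, hard_label,
                      temperature=4.0, alpha=0.5):
    """Weighted sum of a soft-label term and a hard-label term for one sample.

    temperature and alpha are illustrative assumptions, not values from the study.
    """
    # Soft targets: teacher and student distributions at the same temperature.
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student), scaled by T^2 as in Hinton et al. (2015).
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    soft_term = (temperature ** 2) * kl
    # Standard cross-entropy against the hard label at temperature 1.
    hard_term = -math.log(softmax(student_logits)[hard_label])
    return alpha * soft_term + (1.0 - alpha) * hard_term

# Hypothetical two-class logits (eligible / not eligible) for one recipient.
teacher = [2.5, -1.0]
student = [1.2, 0.1]
loss = distillation_loss(student, teacher, hard_label=0)
```

The soft term pushes the student toward the teacher's full probability distribution, while the hard term keeps it anchored to the ground-truth label. In this sketch the soft term vanishes when the student's logits match the teacher's.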

References

- Arnita, A., Marpaung, F., Koemadji, Z. A., Hidayat, M., Widianto, A., Aulia, F. (2023). Selection of Food Identification System Features Using Convolutional Neural Network (CNN) Method. Scientific Journal of Informatics, 10 (2), 205–216. https://doi.org/10.15294/sji.v10i2.44059

- Greco, A., Saggese, A., Vento, M., Vigilante, V. (2021). Effective training of convolutional neural networks for age estimation based on knowledge distillation. Neural Computing and Applications, 34 (24), 21449–21464. https://doi.org/10.1007/s00521-021-05981-0

- Yang, H., Zhang, Y., Yin, C., Ding, W. (2022). Ultra-lightweight CNN design based on neural architecture search and knowledge distillation: A novel method to build the automatic recognition model of space target ISAR images. Defence Technology, 18 (6), 1073–1095. https://doi.org/10.1016/j.dt.2021.04.014

- Li, Y., Luo, J., Zhang, J. (2022). Classification of Alzheimer’s disease in MRI images using knowledge distillation framework: an investigation. International Journal of Computer Assisted Radiology and Surgery, 17 (7), 1235–1243. https://doi.org/10.1007/s11548-022-02661-9

- Song, H., Yuan, Y., Ouyang, Z., Yang, Y., Xiang, H. (2024). Efficient knowledge distillation for hybrid models: A vision transformer‐convolutional neural network to convolutional neural network approach for classifying remote sensing images. IET Cyber-Systems and Robotics, 6 (3). https://doi.org/10.1049/csy2.12120

- Cho, J., Lee, M. (2019). Building a Compact Convolutional Neural Network for Embedded Intelligent Sensor Systems Using Group Sparsity and Knowledge Distillation. Sensors, 19 (19), 4307. https://doi.org/10.3390/s19194307

- Ding, Z., Yang, C., Hu, B., Guo, M., Li, J., Wang, M. et al. (2024). Lightweight CNN combined with knowledge distillation for the accurate determination of black tea fermentation degree. Food Research International, 194, 114929. https://doi.org/10.1016/j.foodres.2024.114929

- Ullah, H., Munir, A. (2023). A 3DCNN-Based Knowledge Distillation Framework for Human Activity Recognition. Journal of Imaging, 9 (4), 82. https://doi.org/10.3390/jimaging9040082

- Ji, M., Peng, G., Li, S., Cheng, F., Chen, Z., Li, Z., Du, H. (2022). A neural network compression method based on knowledge-distillation and parameter quantization for the bearing fault diagnosis. Applied Soft Computing, 127, 109331. https://doi.org/10.1016/j.asoc.2022.109331

- MohiEldeen Alabbasy, F., Abohamama, A. S., Alrahmawy, M. F. (2023). Compressing medical deep neural network models for edge devices using knowledge distillation. Journal of King Saud University - Computer and Information Sciences, 35 (7), 101616. https://doi.org/10.1016/j.jksuci.2023.101616

- Hinton, G., Vinyals, O., Dean, J. (2015). Distilling The Knowledge In A Neural Network. arXiv. https://doi.org/10.48550/arXiv.1503.02531

- Aghli, N., Ribeiro, E. (2021). Combining Weight Pruning and Knowledge Distillation For CNN Compression. 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), 3185–3192. https://doi.org/10.1109/cvprw53098.2021.00356

- Mazlan, A. B., Ng, Y. H., Tan, C. K. (2022). A Fast Indoor Positioning Using a Knowledge-Distilled Convolutional Neural Network (KD-CNN). IEEE Access, 10, 65326–65338. https://doi.org/10.1109/access.2022.3183113

- Chen, W., Gao, L., Li, X., Shen, W. (2022). Lightweight convolutional neural network with knowledge distillation for cervical cells classification. Biomedical Signal Processing and Control, 71, 103177. https://doi.org/10.1016/j.bspc.2021.103177

- Song, H., Wei, C., Yong, Z. (2023). Efficient knowledge distillation for remote sensing image classification: a CNN-based approach. International Journal of Web Information Systems, 20 (2), 129–158. https://doi.org/10.1108/ijwis-10-2023-0192

- Jomaa, L. H., McDonnell, E., Probart, C. (2011). School feeding programs in developing countries: impacts on children’s health and educational outcomes. Nutrition Reviews, 69 (2), 83–98. https://doi.org/10.1111/j.1753-4887.2010.00369.x

- Mhurchu, C. N., Gorton, D., Turley, M., Jiang, Y., Michie, J., Maddison, R., Hattie, J. (2012). Effects of a free school breakfast programme on children’s attendance, academic achievement and short-term hunger: results from a stepped-wedge, cluster randomised controlled trial. Journal of Epidemiology and Community Health, 67 (3), 257–264. https://doi.org/10.1136/jech-2012-201540

- Raveenthiranathan, L., Ramanarayanan, V., Thankappan, K. (2024). Impact of free school lunch program on nutritional status and academic outcomes among school children in India: A systematic review. BMJ Open, 14 (7), e080100. https://doi.org/10.1136/bmjopen-2023-080100

- Hamdan, F., Al-Jarrah, F. (2024). Nutritional Health and Its Impact on Students’ Academic Achievement. Journal of Educational and Social Research, 14 (6), 449. https://doi.org/10.36941/jesr-2024-0185

- Salih, M. S., Pasha, S. A. (2024). Utilizing nutritional and lifestyle data for predicting student academic performance: a machine learning approach. Science Journal of University of Zakho, 12 (3), 356–360. https://doi.org/10.25271/sjuoz.2024.12.3.1288

- Zarlis, M., Oktavia, T., Buaton, R., Ernawan, F., Andrian, K. (2023). Minimizing the Number of Stunting Prevalence Using the Euclid Algorithm Clustering Approach. 2023 International Conference of Computer Science and Information Technology (ICOSNIKOM), 1–7. https://doi.org/10.1109/icosnikom60230.2023.10364489

- Kirk, D., Kok, E., Tufano, M., Tekinerdogan, B., Feskens, E. J. M., Camps, G. (2022). Machine Learning in Nutrition Research. Advances in Nutrition, 13 (6), 2573–2589. https://doi.org/10.1093/advances/nmac103

- Umirzakova, S., Abdullaev, M., Mardieva, S., Latipova, N., Muksimova, S. (2024). Simplified Knowledge Distillation for Deep Neural Networks Bridging the Performance Gap with a Novel Teacher–Student Architecture. Electronics, 13 (22), 4530. https://doi.org/10.3390/electronics13224530

License

Copyright (c) 2026 Relita Buaton, Mesra Betty Yel, Novriyenni Novriyenni, Anton Sihombing, Ida Ria Royentina Sidabukke

This work is licensed under a Creative Commons Attribution 4.0 International License.
