Devising a neural-network method for assessing the condition of destroyed buildings using images from unmanned aerial vehicles

DOI:

https://doi.org/10.15587/1729-4061.2026.351605

Keywords:

UAVs, aerial photography, YOLO, ViT, neural-network classification of damage

Abstract

This study investigates the process of assessing the condition of destroyed buildings from aerial photographs acquired by unmanned aerial vehicles (UAVs). The problem addressed is that post-war monitoring of territories is complicated by the volume of satellite and UAV images, which exceeds the capacity of expert inspection, while the lack of uniform interpretation tools and domain shift reduce the reproducibility of assessments.

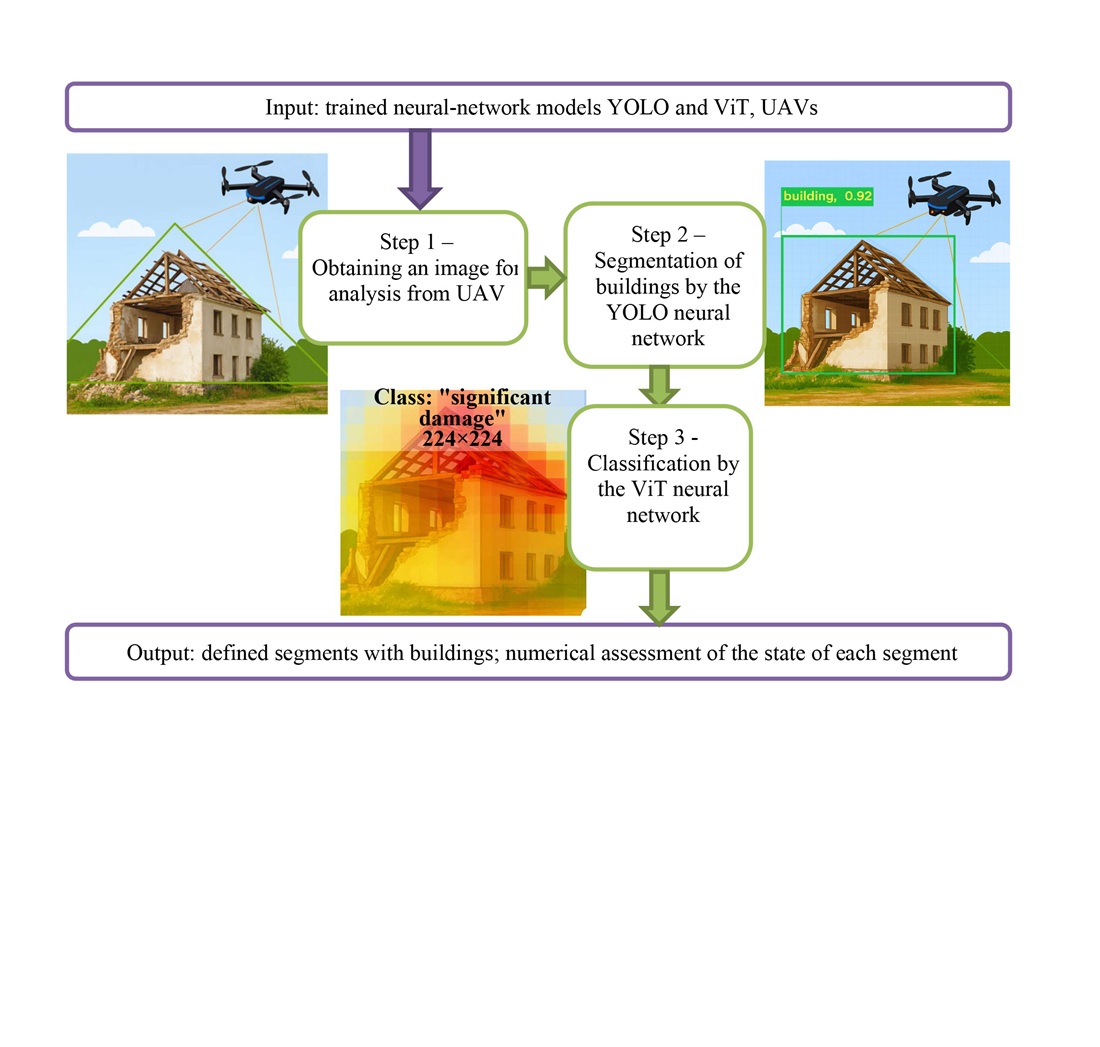

The proposed two-stage neural-network method combines building segmentation with classification of each building's level of destruction on a four-level damage scale: "absent", "minor", "significant", "destroyed". The "xView2" corpus was used as the source material, supplemented with authentic annotated UAV images.
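The two-stage flow described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the names `DamageLevel`, `BuildingAssessment`, `crop`, and `assess_image` are hypothetical, the bounding-box convention is an assumption, and the segmentation and classification stages are passed in as plain callables so that any model could stand behind them.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable, List, Sequence, Tuple


class DamageLevel(IntEnum):
    """Four-level damage scale named in the abstract."""
    ABSENT = 0
    MINOR = 1
    SIGNIFICANT = 2
    DESTROYED = 3


# Assumed conventions (not from the source): a toy row-major image and
# boxes given as (x_min, y_min, x_max, y_max) with exclusive upper bounds.
Image = Sequence[Sequence[int]]
Box = Tuple[int, int, int, int]


@dataclass
class BuildingAssessment:
    box: Box
    level: DamageLevel


def crop(image: Image, box: Box) -> List[List[int]]:
    """Cut the region of one building out of the full image."""
    x0, y0, x1, y1 = box
    return [list(row[x0:x1]) for row in image[y0:y1]]


def assess_image(
    image: Image,
    segment: Callable[[Image], List[Box]],
    classify: Callable[[List[List[int]]], DamageLevel],
) -> List[BuildingAssessment]:
    """Stage 1 locates buildings; stage 2 grades each cropped building."""
    results = []
    for box in segment(image):
        patch = crop(image, box)
        results.append(BuildingAssessment(box=box, level=classify(patch)))
    return results
```

Keeping the two stages behind separate callables means the segmentation model and the damage classifier can be trained, evaluated, and replaced independently of each other.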

The “You Only Look Once” (YOLO) segmentation models, versions v8s and v11n, were used to extract building contours, and a Vision Transformer (ViT) was used to classify damage. Experiments were performed in Google Colab (USA) using PyTorch (USA) and Ultralytics (UK). The Mean Average Precision (mAP) was calculated for the segmentation models; the mAP values remain acceptable even in complex urban settings.
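For readers unfamiliar with the metric, the sketch below shows one common way to compute average precision for a single class and then average it over classes to obtain mAP. It is an assumption-laden illustration: the abstract does not state which IoU threshold or interpolation scheme was used, and the function names `average_precision` and `mean_average_precision` are hypothetical. Each detection is assumed to have already been matched against ground truth at some IoU threshold.

```python
from typing import Dict, List, Sequence


def average_precision(
    scores: Sequence[float],
    is_true_positive: Sequence[bool],
    num_ground_truth: int,
) -> float:
    """Area under the precision-recall curve (all-point interpolation)."""
    if num_ground_truth == 0:
        return 0.0
    # Rank detections from most to least confident.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    precisions: List[float] = []
    recalls: List[float] = []
    for i in order:
        if is_true_positive[i]:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)
    # Replace each precision with the best precision at equal or higher recall.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    # Sum the rectangles under the resulting step curve.
    ap = 0.0
    previous_recall = 0.0
    for precision, recall in zip(precisions, recalls):
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


def mean_average_precision(ap_per_class: Dict[str, float]) -> float:
    """mAP is the unweighted mean of the per-class AP values."""
    if not ap_per_class:
        return 0.0
    return sum(ap_per_class.values()) / len(ap_per_class)
```

Ultralytics reports mAP at an IoU threshold of 0.5 and averaged over thresholds from 0.5 to 0.95; which of these the reported values refer to is not specified in the abstract.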

For the ViT classification model, Precision, Recall, and F1 values above 0.9 were obtained. These values are attributed to the combination of the two-stage architecture and sample balancing.
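As a reminder of how these three metrics relate, the sketch below computes Precision, Recall, and F1 per class from true and predicted labels on the four-level scale. The function name `per_class_metrics` and the sample labels are hypothetical; whether the reported values are per-class or averaged is not stated in the abstract.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple

LABELS = ("absent", "minor", "significant", "destroyed")


def per_class_metrics(
    y_true: Sequence[str],
    y_pred: Sequence[str],
    labels: Sequence[str] = LABELS,
) -> Dict[str, Tuple[float, float, float]]:
    """Return {label: (precision, recall, f1)} for each damage class."""
    tp: Counter = Counter()
    fp: Counter = Counter()
    fn: Counter = Counter()
    for truth, prediction in zip(y_true, y_pred):
        if truth == prediction:
            tp[truth] += 1
        else:
            fp[prediction] += 1  # predicted class gains a false positive
            fn[truth] += 1       # true class gains a false negative
    metrics = {}
    for label in labels:
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        total = precision + recall
        f1 = 2 * precision * recall / total if total else 0.0
        metrics[label] = (precision, recall, f1)
    return metrics
```

Reporting the metrics per class matters on imbalanced data: a classifier can score well overall while performing poorly on a rare class such as "destroyed", which is one reason sample balancing affects these numbers.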

The devised method is applicable to satellite and UAV images; unlike existing solutions, it retains stability under domain shift. The resulting models could be implemented as a basic module in geographic information systems and decision support systems, enabling practical use of this study's results. For correct operation, sufficient image resolution and a representative training sample are required.

License

Copyright (c) 2026 Oleksandr Mazurets, Maryna Molchanova, Maksim Shurypa, Olena Sobko

This work is licensed under a Creative Commons Attribution 4.0 International License.
