Improving speech-to-text for the Indonesian language using a modified transformer

DOI:

https://doi.org/10.15587/1729-4061.2026.350949

Keywords:

ASR, modified transformer, SentencePiece, Indonesian dataset, deep learning

Abstract

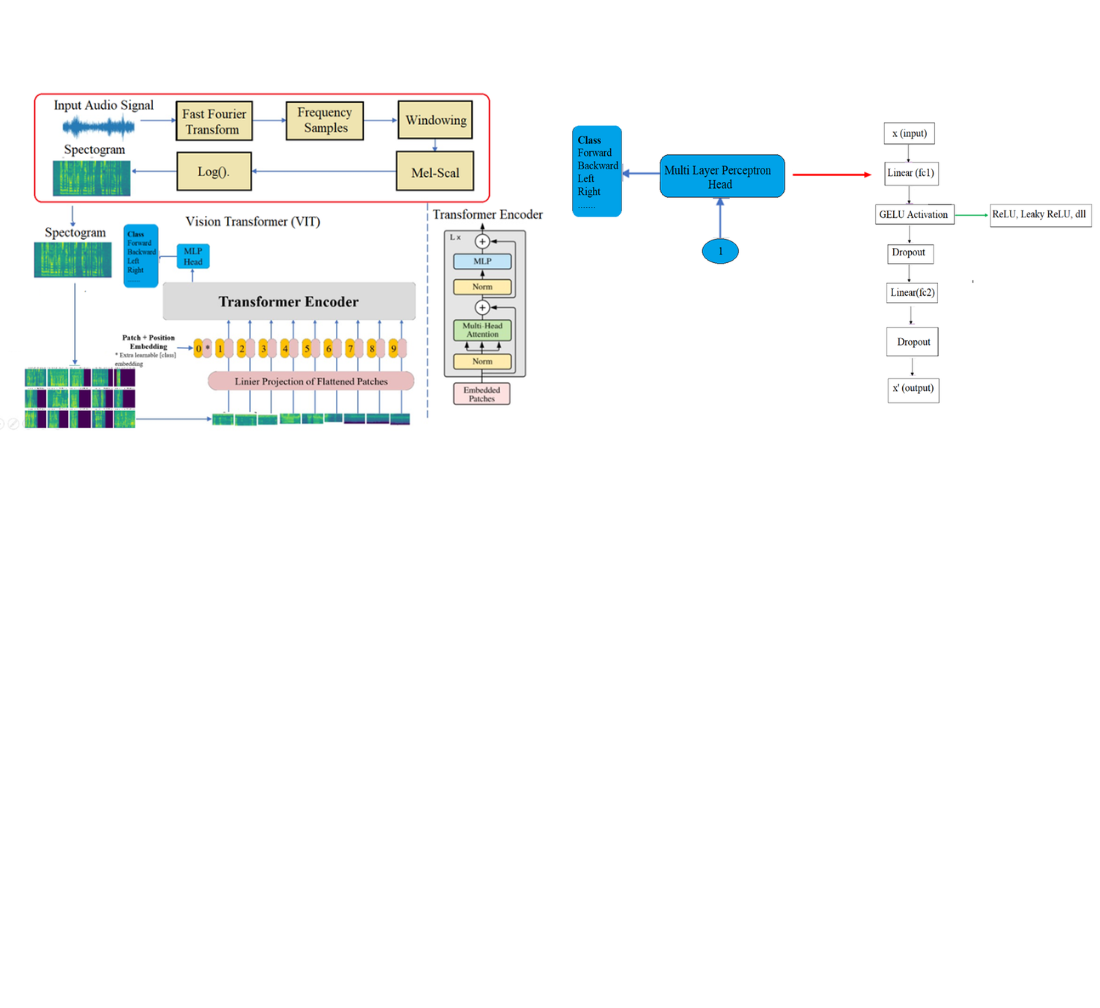

The object of this study is a transformer-based automatic speech recognition (ASR) architecture trained on an Indonesian speech dataset consisting of audio recordings and corresponding transcripts. This study examines the development of an ASR system for Indonesian, which is still classified as a low-resource language, particularly in terms of dataset availability and model performance. The problem addressed in this study is the limited performance of the standard transformer model in accurately recognizing Indonesian speech. To overcome this limitation, an encoder modification integrating convolutional and vision transformer (ViT) blocks was proposed and compared with the baseline model. The data were preprocessed through conversion to 16 kHz mono audio, silence segmentation, pre-emphasis filtering, log-Mel spectrogram extraction, normalization, and subword tokenization using SentencePiece with byte pair encoding (BPE). The dataset was divided into training, validation, and testing sets with a ratio of 80:10:10, comprising 63,952, 7,994, and 7,994 samples, respectively. Model generalization was improved using the SpecAugment data augmentation technique. The experimental results show that the standard model achieves a word error rate (WER) of 0.162 and a character error rate (CER) of 0.121, while the modified model reduces the WER to 0.158 and the CER to 0.118. The significance of this finding lies in the improved feature representation produced by the modified encoder, where the convolutional block captures local acoustic patterns and the ViT block enhances global context modeling on the spectrogram. This complementary mechanism explains the reduction in errors at the word level, which is crucial for a reliable speech-to-text system. Therefore, the proposed model can be applied to real-time two-way communication in service robot applications.
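The WER and CER values reported in the abstract are conventionally defined as the edit distance between the reference transcript and the recognized transcript, divided by the reference length in words or characters. The following is a minimal sketch of that conventional definition, not the authors' evaluation code. The function names `edit_distance`, `wer`, and `cer` are hypothetical, as is the example sentence, and the sketch assumes that spaces count as characters for CER and that no text normalization is applied beforehand.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences.

    Counts the minimum number of substitutions, insertions, and
    deletions needed to turn `ref` into `hyp`.
    """
    prev = list(range(len(hyp) + 1))  # distances from the empty reference prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]  # turning i reference items into an empty hypothesis
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # deletion of a reference item
                curr[j - 1] + 1,     # insertion of a hypothesis item
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate: word-level edit distance over reference word count."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character error rate: character-level edit distance over reference length.

    Assumption: spaces are counted as characters.
    """
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


if __name__ == "__main__":
    # Hypothetical example pair, not taken from the dataset in the study.
    reference = "saya pergi ke pasar"
    hypothesis = "saya pergi pasar"
    print(f"WER = {wer(reference, hypothesis):.3f}")
    print(f"CER = {cer(reference, hypothesis):.3f}")
```

In this example one of the four reference words is dropped, so the WER is 1/4, while the CER is 3/19 because three of the nineteen reference characters (including the space) are missing. Corpus-level scores are usually computed by summing edit distances and reference lengths over all test utterances rather than averaging per-utterance rates; which of the two the study used is not stated in the abstract.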

License

Copyright (c) 2026 Ratna Atika, Suci Dwijayanti, Bhakti Yudho Suprapto

This work is licensed under a Creative Commons Attribution 4.0 International License.
